AI-Driven Automated Structure Mapping for Flood Risk Assessment in Treasure Beach, Jamaica

Abstract

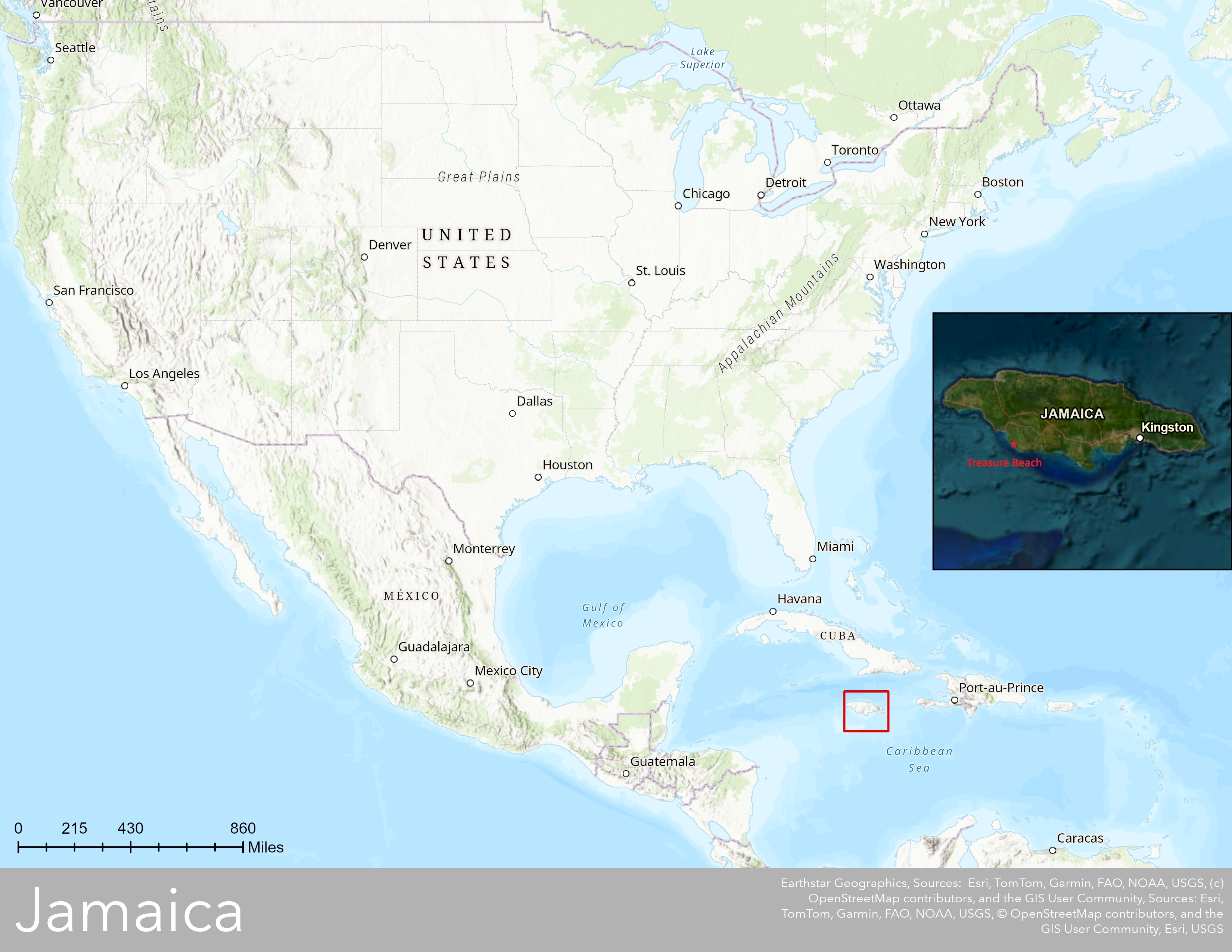

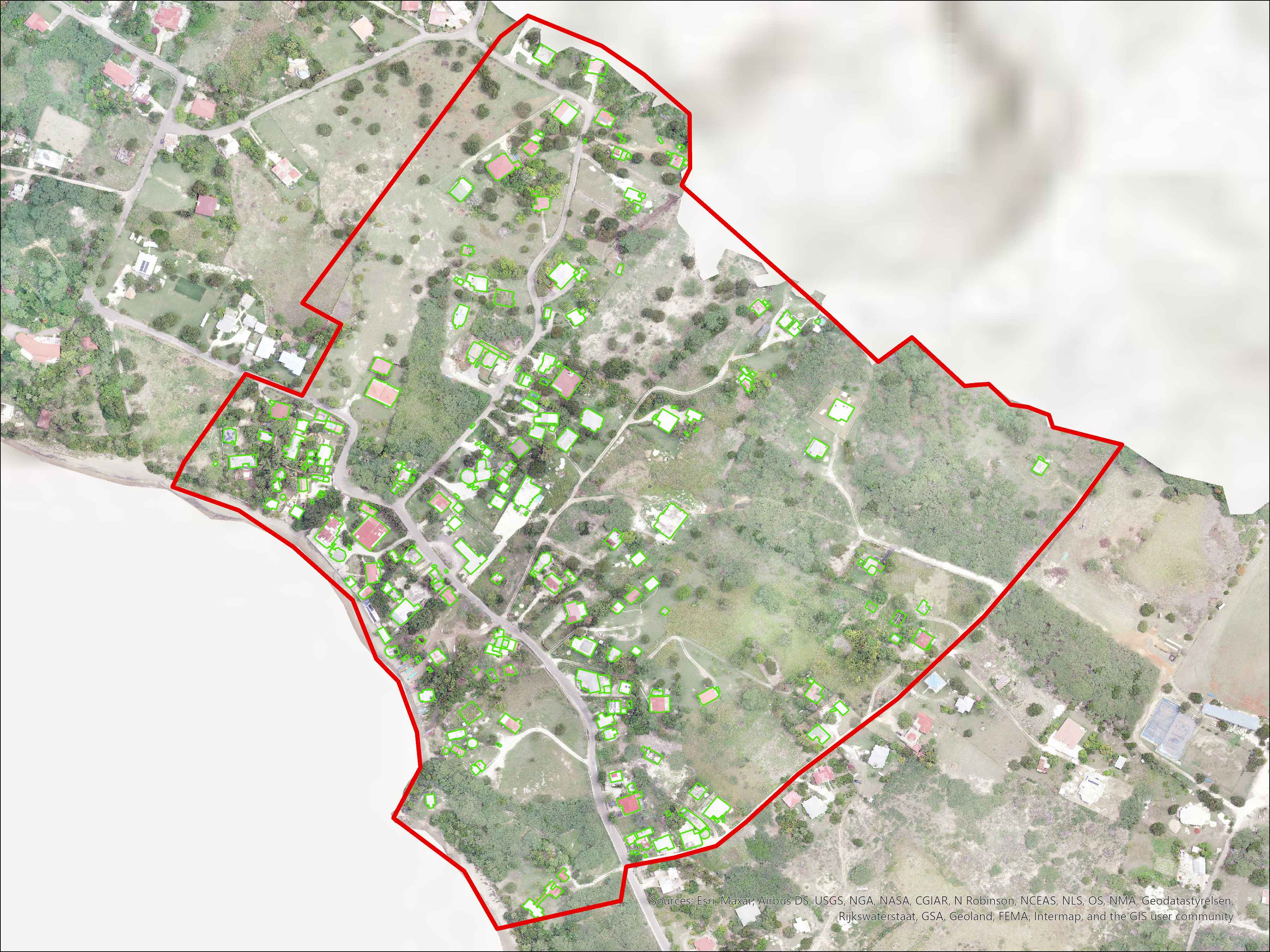

This study explores the application of deep learning in ArcGIS Pro to automate the delineation of structures in Treasure Beach, Jamaica, as part of a flood risk assessment. Drone imagery was analyzed using two tools: Extract Features Using AI Models and Detect Objects Using Deep Learning. The latter tool provided better performance and allowed for more advanced parameter tuning. A hybrid model training approach was adopted using both single-class and multi-class data. Optimized parameters included a tile size of 256 and padding of 64. Among five tested models, the most accurate one detected 89.37% of actual structures while reducing false positives and false negatives. Issues such as object misclassification and over-segmentation were addressed using post-processing steps like the Dissolve Boundaries tool. Overall, the study demonstrates that deep learning can significantly improve the efficiency and accuracy of structure mapping in support of flood preparedness efforts.

Drone imagery was acquired in June 2024 at Treasure Beach, Jamaica for the purpose of mapping flood risk in this community on the southern coast of Jamaica. As part of quantifying flood risk there is a need to digitize and map all the structures in the field area. Our goal was to automate the process of digitizing the structures using AI and Deep Learning tools available in ArcGIS Pro. Specifically, we investigated how to optimize various parameters in these tools to maximize the number of correctly identified structures and how accurately the perimeter of structures was mapped.

Introduction

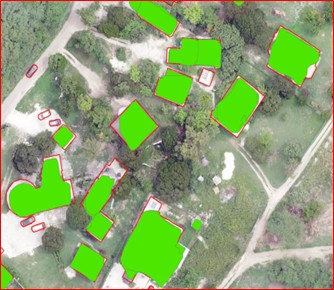

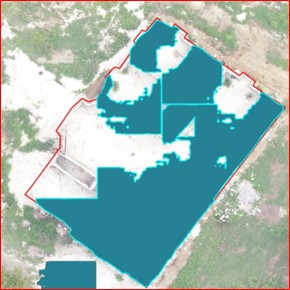

The area of interest is outlined in red; 207 structures were mapped manually for comparison.

This research addresses a subsidiary question of how to efficiently delineate all structures within the Treasure Beach community (i.e., all the structures captured in the drone imagery), which is necessary to assess the risk posed to each structure by flooding. Our primary goal in this poster is to evaluate the completeness and accuracy of Artificial Intelligence / Machine Learning tools in automating the delineation of structures and to report on how best to optimize those tools to maximize completeness and accuracy.

Study Area

Data

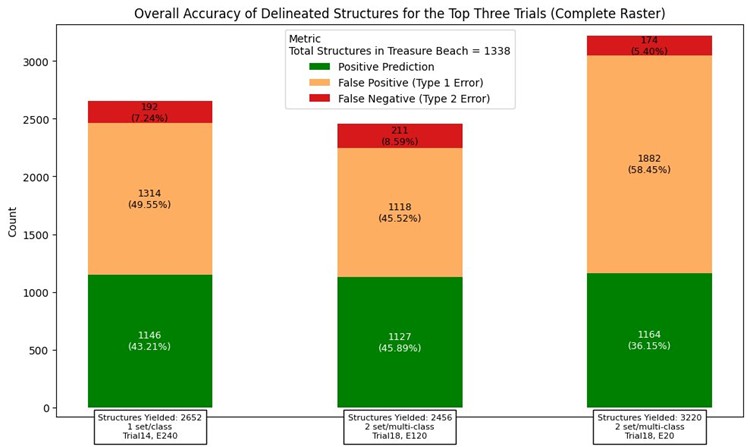

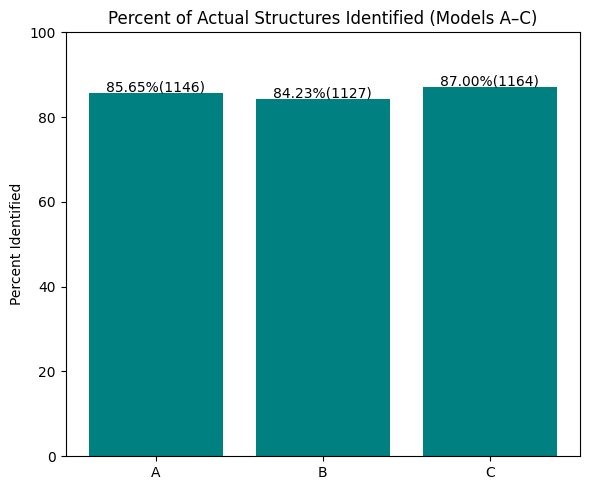

Two raster resolutions were used: 2 cm and 10 cm. The raster contained a total of 1,338 structures, all of them manually digitized.

2 cm

- Models produced better output (89% correctly mapped).

- Higher false positives (56%).

- Some models mistook vehicles and boats for structures.

10 cm

- Good output (82% correctly mapped).

- Much fewer false positives (12%) compared to 2 cm.

We focused on the 2 cm raster because it’s easier to delete false positives than to manually map the remaining structures. False positives can be reduced significantly through custom‑trained models with a large number of epochs.

Methods

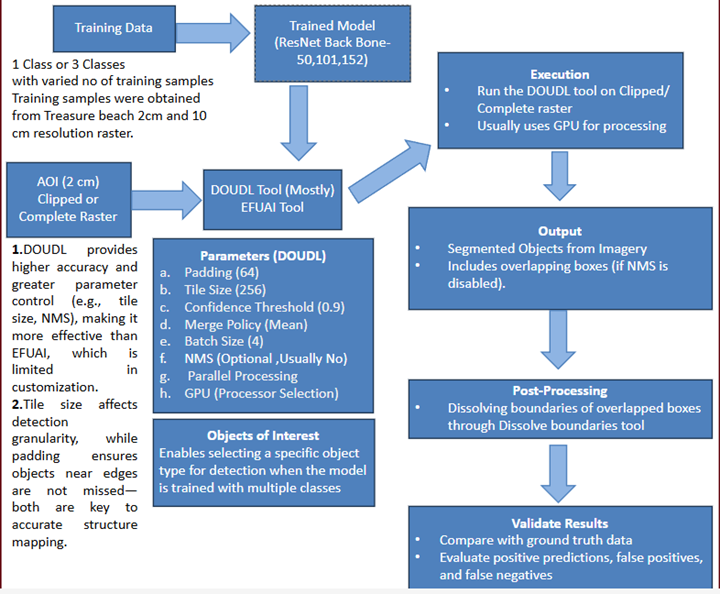

Workflow overview

Pre-trained Models and Tools

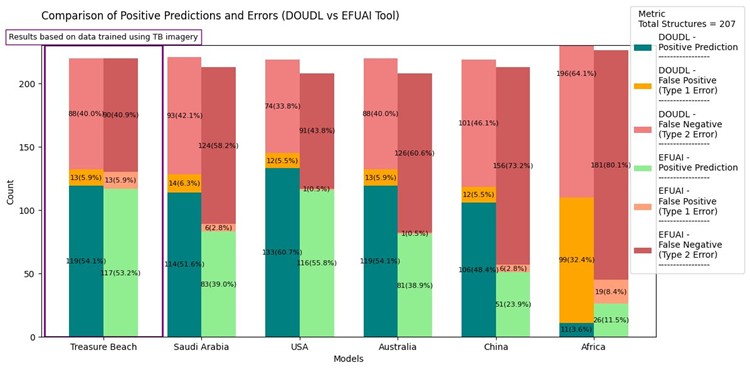

We initially tested pre-trained deep learning models obtained from ArcGIS Living Atlas. The models tested include:

- Building Footprint Extraction – USA

- Building Footprint Extraction – Australia

- Building Footprint Extraction – KSA

- Building Footprint Extraction – New Zealand

- Building Footprint Extraction – Africa

- Building Footprint Extraction – China

Modeling

Backbone Architecture: ResNet50, ResNet101, ResNet152

Model Type: Mask R-CNN

- Max Epochs: 240

- Batch Size: 4

- Chip Size: 256

1. Overview

This study aims to automate the delineation of structures using deep learning tools available in ArcGIS Pro to support flood risk assessment in Treasure Beach, Jamaica. The primary tools utilized for object detection and feature extraction were Detect Objects Using Deep Learning and Extract Features Using AI Models. The model was trained using different training samples and executed using various parameter configurations to evaluate efficiency, completeness, and accuracy.

2. Tools Used for Structure Detection

A. Extract Features Using AI Models (GeoAI Toolset)

Purpose:

- This tool, part of the GeoAI toolset, runs deep learning models to detect and extract features from drone imagery.

- Unlike "Detect Objects Using Deep Learning," it provides a more straightforward execution process with fewer parameter optimization options.

- Generates outputs that do not require additional post-processing to merge overlapping detections.

Processing Approach:

- Initially ran using the Area of Interest (AOI) option, but processing time was extensive.

- Switched to Clipped Raster to reduce computation time while maintaining accuracy.

- Mode: Set to Infer and Post Process, meaning that the model first makes predictions (Infer) and then refines the results in a post-processing step.

- Post-Processing Workflow: Applied Polygon Regularization to smooth and refine detected structure boundaries.

- Confidence Threshold: Set to 90%, so that only high-confidence detections are retained.

- GPU Processing: Utilized GPU acceleration to improve execution efficiency.

Limitations:

- Less efficient compared to "Detect Objects Using Deep Learning".

- Limited flexibility in parameter tuning, affecting optimization potential.

B. Detect Objects Using Deep Learning (Image Analyst Toolset)

Purpose:

- Part of the Image Analyst toolset, providing a more advanced execution framework with greater control over tile size, padding, and Non-Maximum Suppression (NMS).

- Produces more accurate structure delineations compared to "Extract Features Using AI Models".

Processing Approach:

- Initially focused on the Area of Interest (AOI) option, but processing time was long.

- Switched to Clipped Raster to enhance performance.

Key Considerations:

- Without NMS: Produces more overlapping bounding boxes, which require post-processing using the Dissolve Boundaries tool.

- With NMS: Eliminates redundant bounding boxes, refining object delineation and reducing post-processing needs.

Objects of Interest Parameter:

- Specifies the object names to be detected, based on the Model Definition parameter.

- This parameter is only active when the model detects more than one type of object.

- When the model was trained with multiple classes, this parameter provided different options to select from various object types.

- Available classes included Finished Buildings, Sheds, Unfinished Structures, and Structures.

- Because of the hybrid training strategy, each model offered three of the four available classes as options, and the set of three varied from model to model.

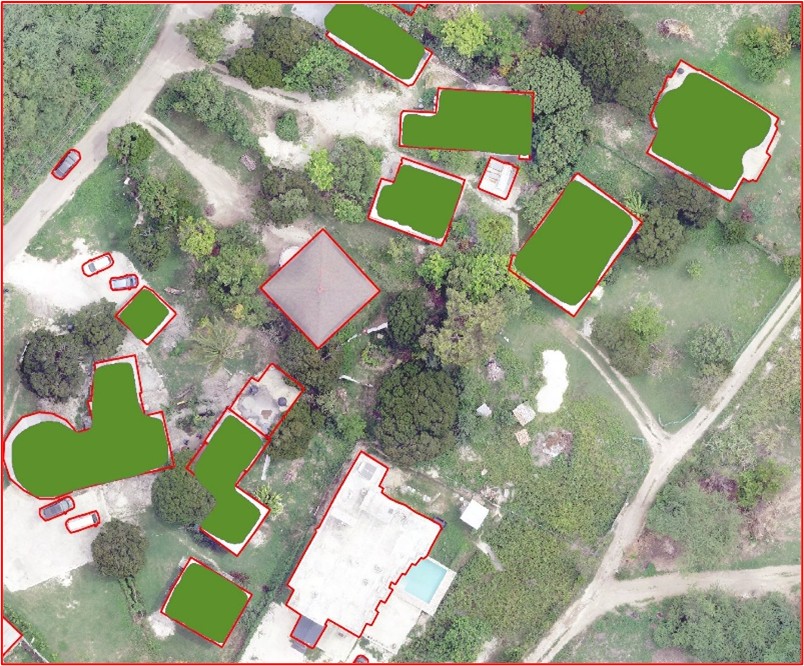

The pretrained USA model downloaded from ArcGIS Living Atlas was executed through two different tools, and each output was compared with the manually mapped set. The first run used Extract Features Using AI Models. The second run used Detect Objects Using Deep Learning without Non-Maximum Suppression, which resulted in overlapping boxes that were later merged using the Dissolve Boundaries tool.
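
The merging of overlapping boxes can be sketched in a few lines of plain Python. This is only an illustration of the idea, not the Dissolve Boundaries tool itself: it assumes axis-aligned boxes given as `(xmin, ymin, xmax, ymax)` and replaces each group of overlapping boxes with one enclosing box, whereas the ArcGIS tool dissolves the actual polygon geometry. The function names and the sample coordinates are hypothetical.

```python
from typing import Dict, List, Tuple

Box = Tuple[float, float, float, float]  # (xmin, ymin, xmax, ymax)


def boxes_overlap(a: Box, b: Box) -> bool:
    """Return True when two axis-aligned boxes share interior area."""
    return a[0] < b[2] and b[0] < a[2] and a[1] < b[3] and b[1] < a[3]


def merge_overlapping(boxes: List[Box]) -> List[Box]:
    """Group boxes that overlap directly or through a chain of overlaps,
    and return one enclosing box per group."""
    parent = list(range(len(boxes)))

    def find(i: int) -> int:
        # Follow parent links to the group representative (with path halving).
        while parent[i] != i:
            parent[i] = parent[parent[i]]
            i = parent[i]
        return i

    for i in range(len(boxes)):
        for j in range(i + 1, len(boxes)):
            if boxes_overlap(boxes[i], boxes[j]):
                parent[find(i)] = find(j)

    groups: Dict[int, List[Box]] = {}
    for i, box in enumerate(boxes):
        groups.setdefault(find(i), []).append(box)

    return [
        (min(b[0] for b in g), min(b[1] for b in g),
         max(b[2] for b in g), max(b[3] for b in g))
        for g in groups.values()
    ]


# Hypothetical detections: the first two overlap, the third stands alone.
detections = [(0, 0, 10, 10), (8, 2, 18, 12), (30, 30, 40, 40)]
merged = merge_overlapping(detections)
print(len(detections), "detections ->", len(merged), "merged structures")
```

Counting the merged groups rather than the raw detections is what keeps the attribute table from overstating the number of structures.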

Problems with 2cm Resolution Raster

Multiple small mappings of the same structure: a single structure was mapped in several small portions instead of as one polygon, which added extra entries to the attribute table and gave an incorrect count of the structures in the raster. Custom-trained models reduced these mistakes significantly.

Boats and vehicles were mistaken for buildings.

Poor representation of structure area and perimeter.

All problems were addressed using custom-trained models.

Commonly Used Parameter Settings

- Padding (64): Determines the overlap between tiles during processing to ensure objects near tile edges are properly detected without being cut off.

- Batch Size (4): Controls the number of images processed simultaneously, balancing performance and memory usage.

- Threshold (0.9): Sets the minimum confidence score required for an object to be classified as a valid detection, filtering out lower-confidence detections.

- Return BBoxes (False): Specifies whether bounding boxes should be included in the output. Since object segmentation was prioritized, this was set to False.

- Test Time Augmentation (False): Prevents additional transformations such as flipping or scaling during inference, ensuring consistency in predictions.

- Merge Policy (Mean): Determines how overlapping detections from different tiles are merged, with "mean" averaging the predictions for improved accuracy.

- Tile Size (256): Defines the size of image tiles processed at a time, affecting detection granularity and computational efficiency.

- Parameters Not Frequently Used:

- NMS

- Use Pixel Space: This parameter determines whether to use pixel-based coordinates instead of geographic coordinates for bounding boxes, affecting how objects are aligned and merged during detection.

- Raster Analysis Settings:

- Parallel Processing Factor: 100%

- Cell Size: Set to Maximum of Inputs

- Processor Type: GPU
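
For scripted runs, the settings listed above could be passed to the tool as a single model-arguments string. The sketch below only builds that string. The argument names (`padding`, `batch_size`, `threshold`, `return_bboxes`, `test_time_augmentation`, `merge_policy`, `tile_size`) are assumed to match the names the model exposes in the tool dialog, and the commented `arcpy` call with its file names is a hypothetical illustration that requires ArcGIS Pro with the Image Analyst extension.

```python
def build_arguments(settings: dict) -> str:
    """Join name/value pairs into the 'name value;name value' form
    used for the tool's model arguments."""
    return ";".join(f"{name} {value}" for name, value in settings.items())


# Values taken from the parameter list above.
model_arguments = {
    "padding": 64,
    "batch_size": 4,
    "threshold": 0.9,
    "return_bboxes": False,
    "test_time_augmentation": False,
    "merge_policy": "mean",
    "tile_size": 256,
}

arguments = build_arguments(model_arguments)
print(arguments)

# Hypothetical call, left commented out because it needs an ArcGIS Pro
# installation; the paths are placeholders, not files from this study.
# import arcpy
# arcpy.env.processorType = "GPU"
# arcpy.env.parallelProcessingFactor = "100%"
# arcpy.env.cellSize = "MAXOF"
# arcpy.ia.DetectObjectsUsingDeepLearning(
#     "clipped_2cm.tif",        # in_raster (placeholder)
#     "structures_detected",    # out_detected_objects (placeholder)
#     "structures_model.emd",   # in_model_definition (placeholder)
#     arguments,
#     "NO_NMS",
# )
```

Keeping the settings in one dictionary makes it easier to vary a single parameter between runs while holding the others fixed.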

3. Training Data & Samples

A. Training Data Variations

To improve detection accuracy, different datasets were created:

-

Multi-Class Training Data:

- Classified into Finished Buildings, Sheds, and Unfinished Structures.

- Helps distinguish different structure types but may introduce classification confusion.

-

Single-Class Training Data:

- Treated all structures as a single category ("Structures").

- Achieved better accuracy by focusing purely on object detection rather than classification.
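
One way to derive a single-class dataset from multi-class labels is to remap every structure sub-type to the common label. The snippet below is a minimal sketch under that assumption; it does not describe how the training samples in this study were actually exported, and the sample label list is made up for illustration.

```python
from collections import Counter

# Class names as listed above; the remapping itself is an illustrative assumption.
MULTI_CLASS = {"Finished Buildings", "Sheds", "Unfinished Structures"}
SINGLE_CLASS = "Structures"


def collapse_to_single_class(labels):
    """Replace every structure sub-type with the single 'Structures' label."""
    return [SINGLE_CLASS if label in MULTI_CLASS else label for label in labels]


# Hypothetical labels for five training samples.
labels = ["Finished Buildings", "Sheds", "Unfinished Structures", "Sheds", "Structures"]
collapsed = collapse_to_single_class(labels)

print(Counter(labels))
print(Counter(collapsed))
```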

B. Training Sample Size

- Training datasets varied from 20 to 163 samples, tested to assess the impact of dataset size on model accuracy.

- Different pre-trained models from ArcGIS Living Atlas were used for comparative evaluation (e.g., USA, Australia, New Zealand models).

4. Model Training & Execution

A. Deep Learning Model Configuration

- Backbone Architecture: ResNet-50, ResNet-101, ResNet-152

- Training Epochs: 20 vs. 120 Epochs tested

- Model Evaluation:

- Shorter training (20 epochs): Higher recall but more false positives.

- Longer training (120 epochs): Improved precision with fewer false positives but slight loss in recall.
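
The trade-off between the two training lengths can be expressed with the standard precision and recall formulas. The counts below are hypothetical and chosen only to show the pattern described above; they are not results from this study.

```python
def precision_recall(true_pos: int, false_pos: int, false_neg: int):
    """Precision = TP / (TP + FP); recall = TP / (TP + FN)."""
    detected = true_pos + false_pos
    actual = true_pos + false_neg
    precision = true_pos / detected if detected else 0.0
    recall = true_pos / actual if actual else 0.0
    return precision, recall


# Hypothetical counts: a short run finds more structures but over-detects,
# while a long run is more selective and misses a few more.
short_run = precision_recall(true_pos=90, false_pos=60, false_neg=10)
long_run = precision_recall(true_pos=85, false_pos=15, false_neg=15)

print("short run: precision %.2f, recall %.2f" % short_run)
print("long run:  precision %.2f, recall %.2f" % long_run)
```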

B. Parameter Optimization & Execution

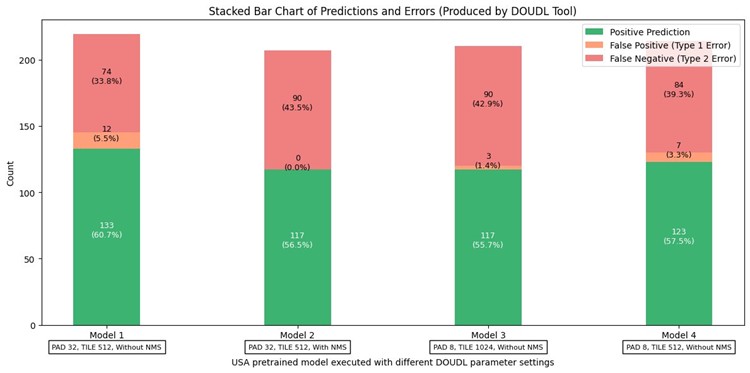

Tile and Padding Size

- Smaller Tiles (512px):

- Provided finer object detection with better localization.

- Increased overlapping bounding boxes when NMS was disabled.

- Larger Tiles (1024px):

- Captured broader regions but slightly reduced detection accuracy.
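
To see how tile size and padding interact, the sketch below computes tile start positions along one image axis. It assumes that neighbouring tiles overlap by twice the padding, so the step between tiles is `tile_size - 2 * padding`. That is a common convention and an assumption here; the tiling performed internally by ArcGIS Pro may differ. The 1,000-pixel raster width is a made-up example.

```python
import math


def tile_origins(length: int, tile_size: int, padding: int):
    """Start offsets of tiles along one axis, assuming neighbouring tiles
    overlap by 2 * padding pixels."""
    stride = tile_size - 2 * padding
    if stride <= 0:
        raise ValueError("padding must be smaller than half the tile size")
    # Enough tiles that the last one reaches the end of the axis.
    count = max(1, math.ceil((length - 2 * padding) / stride))
    return [i * stride for i in range(count)]


# Settings reported in this study (tile size 256, padding 64) applied to a
# hypothetical 1,000-pixel-wide raster.
origins = tile_origins(length=1000, tile_size=256, padding=64)
print(len(origins), "tiles per row, starting at", origins)
```

Under this assumption, a larger padding shrinks the step between tiles, which increases the number of tiles and therefore the processing time.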

Non-Maximum Suppression (NMS)

- Without NMS:

- More detections but required post-processing via Dissolve Boundaries Tool.

- With NMS:

- Reduced redundancy in overlapping bounding boxes.

- Ensured each structure was uniquely delineated, minimizing the need for post-processing.
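
The effect of NMS can be illustrated with the standard greedy algorithm: keep the highest-scoring detection, then drop any remaining detection whose intersection over union (IoU) with a kept one exceeds a limit. This is a generic sketch, not the ArcGIS implementation; the `max_overlap` value of 0.5 and the sample boxes are assumptions for illustration.

```python
def iou(a, b):
    """Intersection over union of two (xmin, ymin, xmax, ymax) boxes."""
    inter_w = max(0.0, min(a[2], b[2]) - max(a[0], b[0]))
    inter_h = max(0.0, min(a[3], b[3]) - max(a[1], b[1]))
    inter = inter_w * inter_h
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(detections, max_overlap=0.5):
    """Greedy NMS over a list of (box, score) pairs."""
    kept = []
    for box, score in sorted(detections, key=lambda d: d[1], reverse=True):
        if all(iou(box, kept_box) <= max_overlap for kept_box, _ in kept):
            kept.append((box, score))
    return kept


# Hypothetical detections: the first two cover the same structure.
detections = [
    ((0, 0, 10, 10), 0.95),
    ((1, 1, 11, 11), 0.90),
    ((20, 20, 30, 30), 0.92),
]
kept = non_max_suppression(detections)
print(len(detections), "detections ->", len(kept), "after NMS")
```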

5. Post-Processing & Validation

- Dissolve Boundaries Tool: Used to merge overlapping boxes when NMS was disabled.

- Model Accuracy Testing:

- Evaluated against manually labeled structures.

- Compared results with random structures from OpenStreetMap to measure detection reliability.

To test the accuracy of the model, 100 random structures were selected from OpenStreetMap (top) to check whether the model had mapped them (bottom).
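
A check of this kind can be sketched as a count of reference structures that fall inside at least one detection. The example below simplifies each reference structure to a centroid point and each detection to a bounding box, which is an assumption made for brevity; a fuller check would intersect the actual polygons. All coordinates are hypothetical.

```python
def point_in_box(point, box):
    """True when an (x, y) point lies inside an (xmin, ymin, xmax, ymax) box."""
    x, y = point
    xmin, ymin, xmax, ymax = box
    return xmin <= x <= xmax and ymin <= y <= ymax


def completeness(reference_points, detected_boxes):
    """Return (hits, percent) of reference points covered by any detection."""
    if not reference_points:
        raise ValueError("at least one reference point is required")
    hits = sum(
        1 for p in reference_points
        if any(point_in_box(p, b) for b in detected_boxes)
    )
    return hits, 100.0 * hits / len(reference_points)


# Hypothetical reference centroids and model detections.
reference_points = [(5, 5), (25, 25), (50, 50), (70, 10)]
detected_boxes = [(0, 0, 10, 10), (20, 20, 30, 30), (65, 5, 75, 15)]

hits, percent = completeness(reference_points, detected_boxes)
print(f"{hits} of {len(reference_points)} reference structures detected ({percent:.1f}%)")
```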

Results

This graph compares the completeness of the AI/ML mapped structures between our training data set for Treasure Beach (left) versus the publicly available models we obtained that were trained for various geographic areas. The bottom graph compares the results of different combinations of tool parameters that vary the Pad, Tile, and NMS.

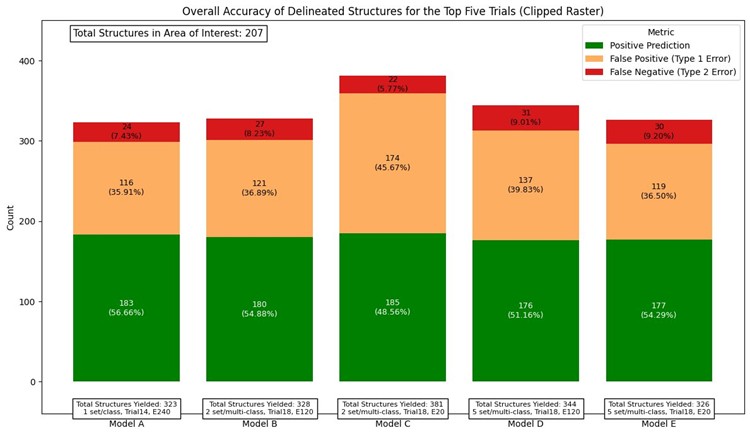

Summary of Model Performance (Clipped Raster Execution)

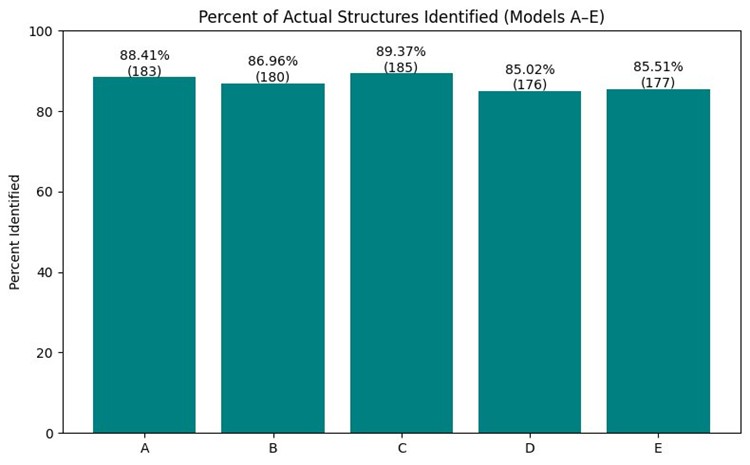

Five deep learning models (labeled A through E) were executed using clipped raster inputs to evaluate structure detection performance. Each model was trained using a combination of different class configurations and training samples.

All models were assessed based on the percentage of actual ground-truth structures identified. Model C achieved the highest detection rate at 89.37%, followed by Model A at 88.41%. Models B, D, and E demonstrated slightly lower but still competitive accuracy levels, ranging from approximately 85% to 87%.

- Model A: From the 14th trial; trained for 240 epochs on 135 image samples of a single class, “Structures”.

- Model B: From the 18th trial; trained for 120 epochs on 163 image samples of a single class, “Structures”, plus 50 samples of three classes: “Finished Buildings”, “Sheds”, and “Unfinished Structures”.

- Model C: Same training data and classes as Model B, but trained for only 20 epochs.

- Model D: From the 18th trial; trained for 120 epochs. Three-class samples: 50 (2 cm) and 57 (10 cm). Single-class “Structures” samples: 163, 105 (10 cm), and 33 (2 cm).

- Model E: Same training data and classes as Model D, but trained for only 20 epochs.

All models were configured with a tile size of 256 and padding of 64.

Each model used the ResNet-152 backbone architecture, known for its deep residual learning and strong performance in object detection tasks. Most of the training samples came from the 2 cm resolution raster, and all models were executed on the 2 cm resolution raster. Detect Objects Using Deep Learning was used for all runs with GPU acceleration, clipped raster inputs, and optimized settings. The use of a clipped raster helped reduce processing time while maintaining high detection accuracy. Overall, the comparative results demonstrate how model architecture, training class strategy, number of epochs, and parameter tuning impact structure detection outcomes in AI-assisted mapping workflows.

6. Conclusion

- Detect Objects Using Deep Learning produced more optimized outputs compared to Extract Features Using AI Models, especially when used with NMS.

- Training with single-class data resulted in better accuracy compared to multi-class datasets.

- Parameter tuning (tile size, padding, and NMS) played a crucial role in refining structure delineations.

- Longer training improved precision but required balancing recall to avoid missing structures.

This methodology provides a structured approach to AI-based structure mapping, improving flood risk assessment through automation and optimization.

Optimal Approach to Delineating Structures

The optimal solution for delineating structures in the full AOI was to use the 2 cm resolution image with the Detect Objects Using Deep Learning (DOUDL) tool. The optimal parameters included a Tile Size of 256, Padding of 64, and a Confidence Threshold of 0.90 (90%). The model was trained using the ResNet-152 architecture with a single class labeled ‘Structures’, as multi-class training introduced confusion and reduced model performance. Other key settings included a Batch Size of 4, Non-Maximum Suppression (NMS) disabled, GPU processing, and Cell Size set to ‘Maximum of Inputs’.

Future Ideas

Future plans include enhancing the model's classification capabilities by introducing more distinct object categories beyond structures. These may include vehicles, boats, roads, and vegetation, alongside existing categories such as Finished Buildings, Sheds, and Unfinished Structures. This would allow the model to better distinguish between man-made and natural features in complex imagery.

Additionally, training new models with a higher number of epochs and larger, more diverse datasets is anticipated to reduce false positives and improve overall model robustness.

Alternative machine learning algorithms, such as decision trees and random forest classifiers, may also be explored to assess their effectiveness in supplementing or refining deep learning outputs, particularly in post-processing and classification tasks.

Acknowledgments

References

He, K., Zhang, X., Ren, S., & Sun, J. (2016). Deep residual learning for image recognition. Proceedings of the IEEE Conference on Computer Vision and Pattern Recognition, 770–778. (Cited for ResNet-152.)